Recently, we discussed “The importance of Closing the Loop on Your Data”. Let’s go a bit deeper on the subject of predictive modeling (some folks use the term AI) and how the nuances of your closed-loop data significantly affect it.

Let’s examine two areas of nuance that so often get overlooked: designing features and defining conversion.

Designing Features

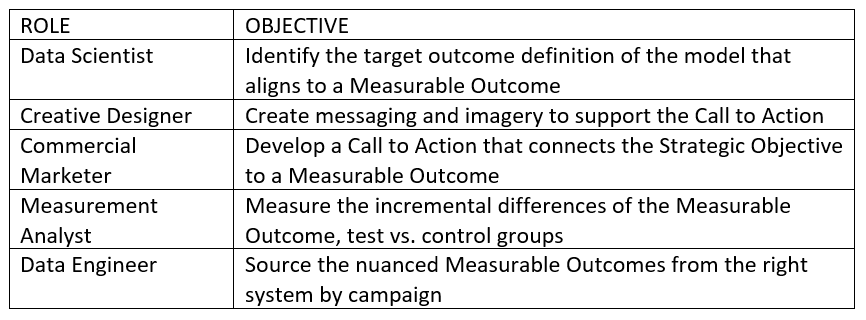

This begins with people curating data. Specifically, several people-based roles need to “come together”. Coming together means these roles speak the same language, and that language takes the form of data. We refer to this data as features. Features are the characteristics or attributes of successfully (or unsuccessfully) meeting your objectives. For example, these roles may have these objectives:

For Example:

Target Corporation’s now-infamous predictive modeling debacle is a great example of how a variety of people-based roles developed features on point-of-sale data.

At Toovio, we like to say “we cultivate (data) empathy” because experience tells us that the best features usually arise when these people and roles collaborate.

Collaboration specifically means that these roles design the features together in the context of a measurable data outcome. In Target’s case, the most common outcome is a customer purchasing a specific product.

Defining Conversion

“Aim small, miss small” is a great way to think about defining conversion events. Statistically speaking, predictive models are looking for discrimination, so the more nuance we can build into the conversion (or target) definition, the better. The most important data feature in defining conversion is time. A few time-based rules to follow when defining conversion:

- The conversion event must occur post stimulus at the customer level

- The conversion event should have an eligible time window (start and end date)

- Consider testing multiple eligible time windows

- The customers you identify conversions for should be collected
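The time-based rules above can be sketched in a few lines of Python. This is a minimal illustration, not a definitive implementation: the `Stimulus` and `Event` records, the `label_conversions` helper, the sample customers, and the 7- and 30-day windows are all hypothetical, and the last rule is read here as "keep a label for every stimulated customer, including those who did not convert".

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Stimulus:
    """Hypothetical record: a customer received an offer at `sent_at`."""
    customer_id: str
    sent_at: datetime


@dataclass(frozen=True)
class Event:
    """Hypothetical record: a candidate conversion event for a customer."""
    customer_id: str
    occurred_at: datetime


def label_conversions(stimuli, events, window_days):
    """Return {customer_id: converted?} for every stimulated customer.

    An event counts only if it belongs to the same customer, happens
    after the stimulus, and falls inside the eligible window.
    """
    window = timedelta(days=window_days)
    labels = {}
    for s in stimuli:
        start, end = s.sent_at, s.sent_at + window
        converted = any(
            e.customer_id == s.customer_id and start < e.occurred_at <= end
            for e in events
        )
        # Keep non-converters too, so the model sees both outcomes.
        labels[s.customer_id] = labels.get(s.customer_id, False) or converted
    return labels


# Illustrative data only (assumed, not from any real campaign).
stimuli = [
    Stimulus("a", datetime(2024, 1, 1)),
    Stimulus("b", datetime(2024, 1, 1)),
    Stimulus("c", datetime(2024, 1, 1)),
]
events = [
    Event("a", datetime(2024, 1, 5)),    # 4 days after the stimulus
    Event("b", datetime(2023, 12, 30)),  # before the stimulus: ignored
    Event("c", datetime(2024, 1, 20)),   # 19 days after the stimulus
    Event("d", datetime(2024, 1, 2)),    # customer was never stimulated
]

# Test more than one eligible window and compare the resulting labels.
labels_7 = label_conversions(stimuli, events, window_days=7)
labels_30 = label_conversions(stimuli, events, window_days=30)
print(labels_7)
print(labels_30)
```

In this sketch, customer "c" converts only under the longer window, which is exactly the kind of difference that testing multiple eligible time windows is meant to surface.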

Next up is value. Of all the events (i.e., dispositions) you can track, the ones you call conversions should each have a value associated with them. This is becoming more difficult these days, as many digital interactions are behavior-based (no dollar-based transaction involved). The commercial marketer is probably best placed to lead the charge here, with critical support from a data engineer. In our experience, this is the hardest part of defining conversion and the easiest to overlook in the design phase. It is only through measurement that any mistakes or oversights in the definition of conversion value become clear.

For Example:

A telco organization may have a large portion of subscribers who don’t use the service. Do we want to stimulate subscriber usage in this group, or do we want to prevent subscriber cancellation? What’s the value associated with these two different conversion events? Once you have that information, you’ll be able to map measurable outcomes with speed.

To sum it up, moving at the speed of your customer data is challenging and nuanced, but valuable to predictive modeling when done right.